So you have your 3D scanner: maybe you bought one, maybe you turned a Kinect into one using the guide that I published recently. Now the question becomes: what can you actually do with the data you acquire? While I offered a short list in the guide, one of the options, reverse engineering, is a pretty in-depth process that warrants some extra explanation. I can’t show you everything you’re going to need to do to retopologize scan data, but I wanted to at least introduce you to some concepts you can use on the cheap, using 3D scans of the motorcycle you see above.

The goal of reverse engineering a scanned part is to produce a smooth CAD model that can be easily modified and manufactured. A raw 3D scan is going to be too rough and full of anomalies to create a cast part from, and of course you will be unable to modify any of it with any degree of precision. For the purposes of this tutorial, we will be using a 3D scan of a 2006 Kawasaki ZX-6R, provided by the Rochester Institute of Technology’s Electric Vehicle Team (Facebook). They scanned it using a Primesense Carmine 1.09 (YouTube), which is very similar in capabilities and operation to a Kinect 1.0.

The first thing you’ll want to do is choose your tools; it’s recommended to have both a solid modeler and a surface modeler for breadth of ability, but you can get away with one or the other (if in doubt, get a surface modeler). Either way, your modeling package needs to support the importation of polygon files, as all scan data will be in point cloud or polygon format. There are programs specifically made for this task, like Geomagic Design X (Website), but they can cost several thousand dollars – we’re going to learn how to do it on the cheap.

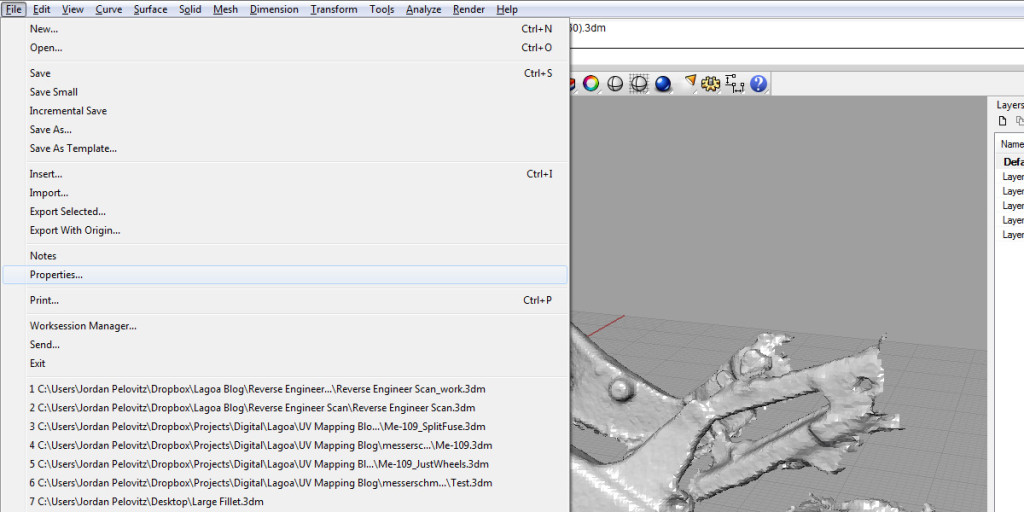

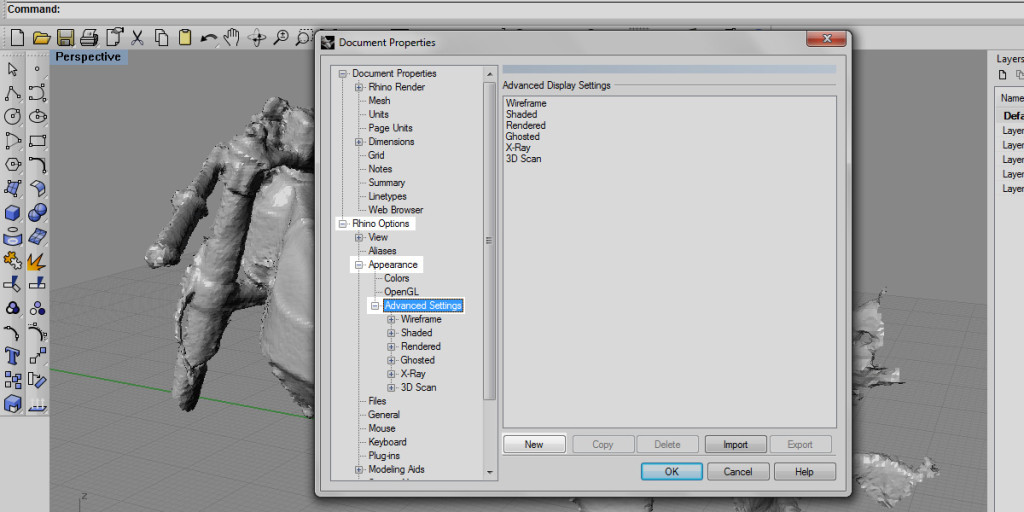

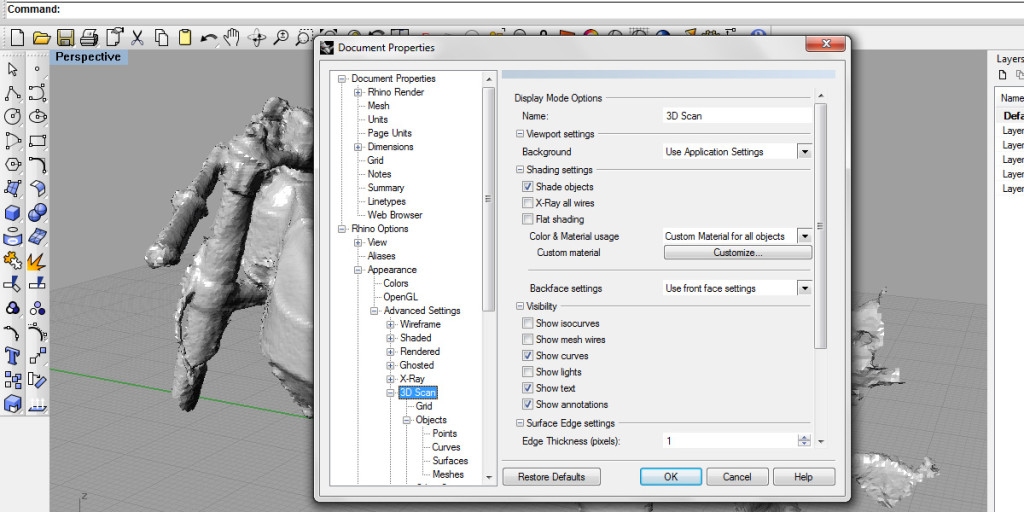

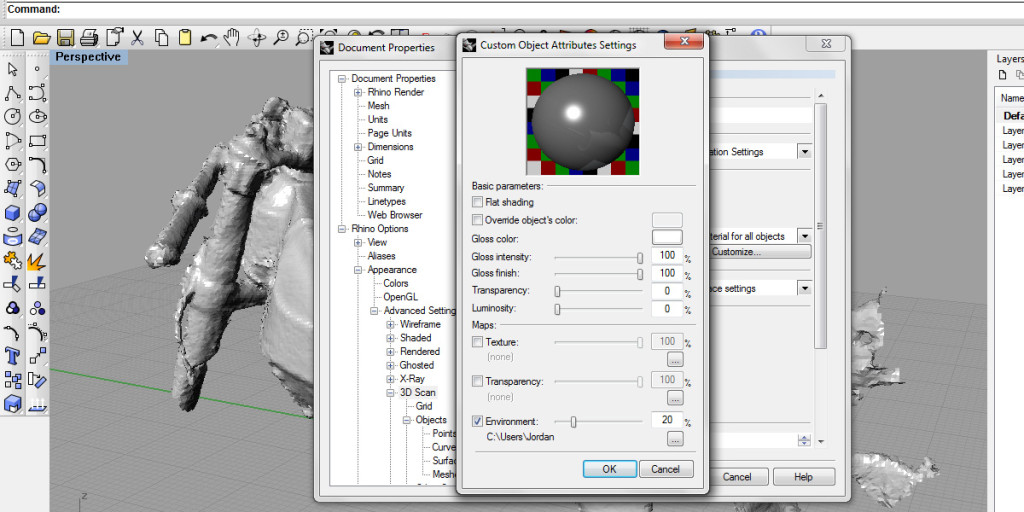

For this article, we will be using Rhino to build surfaces based on a scan and join them together. This tutorial assumes you have at least a basic understanding of Rhino’s interface, such as how to use the command line and move the camera. Before we get into any actual work, though, we need to create a custom display mode for Rhino; the ones that it ships with make it difficult to see the subtle changes in the scan data’s surface. Check out the gallery below for the deets on creating your own Rhino view modes.

Create a Rhino View Mode

Rebuilding the Model

1) Import your scan data and align it to the three major axes of your workspace using the Move and Rotate commands.

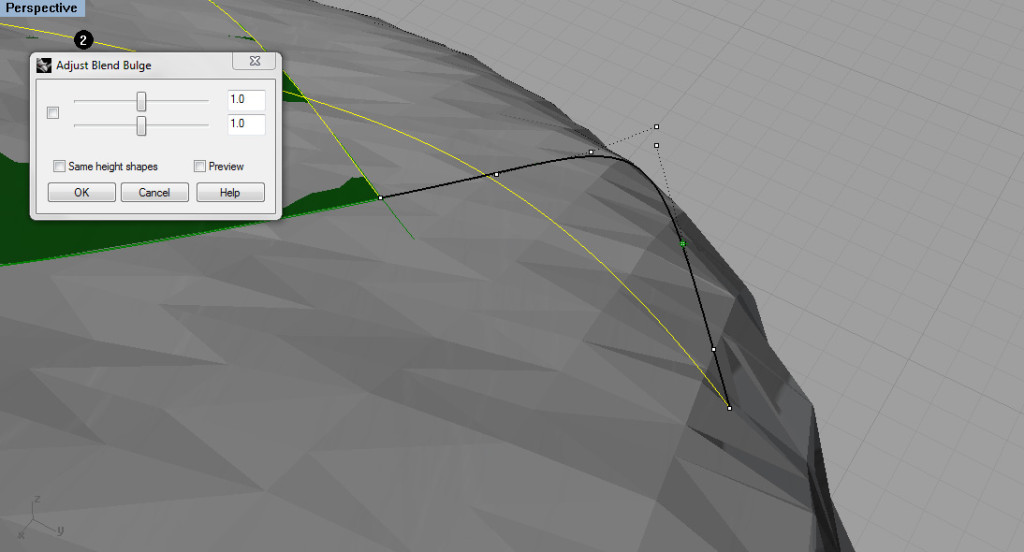

2) Map out where your surfaces are going to begin and end. Generally you want to avoid rebuilding fillets and surface blends by hand – you will get better, more predictable results by using Fillet and Blend Surface commands to bridge the gaps between major surfaces. In the sketch image above, I have printed out a screenshot of the model and traced over it. Blue and yellow are significantly different in their shape and major planar directions, so they will be made as two separate surfaces. The green surface, meanwhile, is a filleted blend between the two, and thus will be done using a Blend Surface command, instead of trying to create it manually. The red surface was originally going to be separate but I decided to do it with the others instead.

3) Lay out 2D curves in the side view. Make sure that they follow the pattern you drew previously.

4) Use the Project command to project your curves onto the 3D scan. The Project command will project in both directions and onto all available geometry; delete what you don’t need.

5) Rebuild these projected curves into usable ones. A straight projection will be unusable: it will be very rough and have potentially hundreds of control points (CPs), and you want to minimize the number of CPs in any curve you draw. You have two options for rebuilding these curves. You can select them and use the Rebuild command for a quick but less precise result, or you can turn on Object Snap with End and Near selected and retrace the curves using the Interpolate Points (InterpCrv) command for a more precise, manual result. You can also use Point Snap to snap to individual vertices on the scan.
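To make the "fewer control points" idea concrete, here is a minimal Python sketch that takes a dense polyline (standing in for a projected curve) and resamples it to a handful of points evenly spaced along its length. This is not what Rhino’s Rebuild command does internally (Rebuild refits a NURBS curve to a point count and degree you choose); the function name `resample_polyline` and the sample data are my own, purely for illustration.

```python
import math

def resample_polyline(points, count):
    """Return `count` points evenly spaced by arc length along a 3D polyline."""
    if count < 2 or len(points) < 2:
        raise ValueError("need at least two input points and count >= 2")
    # Cumulative arc length at each input vertex.
    lengths = [0.0]
    for a, b in zip(points, points[1:]):
        lengths.append(lengths[-1] + math.dist(a, b))
    total = lengths[-1]
    result = []
    seg = 0
    for i in range(count):
        target = total * i / (count - 1)
        # Advance to the segment that contains the target arc length.
        while seg < len(points) - 2 and lengths[seg + 1] < target:
            seg += 1
        span = lengths[seg + 1] - lengths[seg]
        t = 0.0 if span == 0 else (target - lengths[seg]) / span
        a, b = points[seg], points[seg + 1]
        # Linear interpolation between the two vertices of that segment.
        result.append(tuple(a[k] + t * (b[k] - a[k]) for k in range(3)))
    return result

# Hypothetical "projected curve": 101 vertices along a 10 mm line.
dense = [(i * 0.1, 0.0, 0.0) for i in range(101)]
sparse = resample_polyline(dense, 5)
print(len(dense), "->", len(sparse), "points")
```

The reduced points could then serve as the locations you click through when retracing the curve by hand. A real projected curve is noisy, so in practice you would still eyeball the result against the scan.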

Remember to create fillets with Fillet or Blend Surface wherever you can. In this case, by using the Blend Surface (BlendSrf) command, I can get much tighter control over the way the surfaces blend together. If I tried to wrap the curves around the fillet, I would end up with difficult-to-control curves with bulges in weird places.

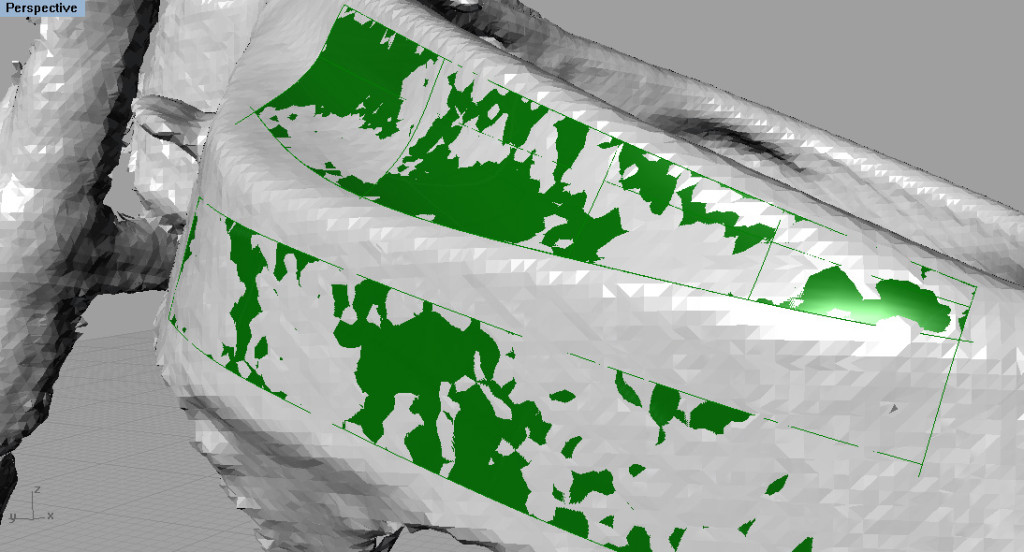

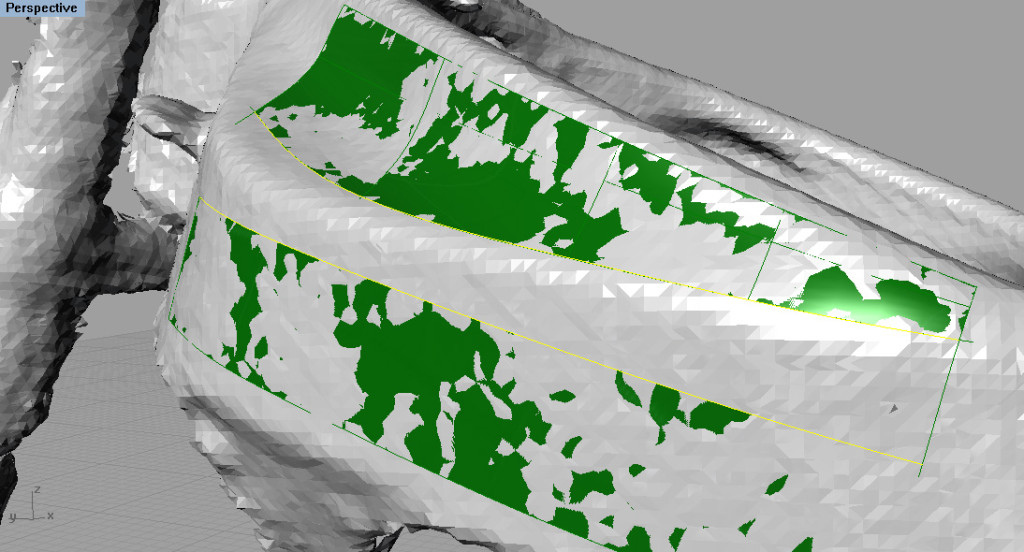

7) Check your surfaces for accuracy. You can do this by going to Analyze → Surface → Point Set Deviation in your toolbar at the top of the program. Select the polygon mesh and the surfaces that you wish to test.

When the analysis first comes up, it may look pretty bad, filled with red. Don’t worry; the initial parameters are pretty stringent. If you’re using millimeters, try changing your settings so that a really good point is about 0.01 mm away from the reconstructed surface, a bad point is 0.1 mm away, and all points more than 1 mm away are ignored. Hit Apply to see your results! Pull out a ruler to see just how tiny 0.01 mm is; this should be good enough for most purposes.
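If it helps to see what those three numbers mean, here is a rough Python sketch of the same bucketing logic. It assumes the rebuilt surface is a simple flat plane so the distance is easy to compute; Rhino measures against your actual surfaces, and the names `plane_distance` and `classify` are made up for this example.

```python
import math

def plane_distance(point, origin, normal):
    """Unsigned distance (same units as the input) from a point to a plane."""
    n_len = math.sqrt(sum(c * c for c in normal))
    offset = [p - o for p, o in zip(point, origin)]
    return abs(sum(d * n for d, n in zip(offset, normal))) / n_len

def classify(distance_mm, good=0.01, bad=0.1, ignore=1.0):
    """Bucket a deviation using the thresholds suggested above (in mm)."""
    if distance_mm > ignore:
        return "ignored"      # too far away to count at all
    if distance_mm <= good:
        return "good"
    if distance_mm >= bad:
        return "bad"
    return "in between"

# Hypothetical scan points hovering over the plane z = 0.
scan_points = [(0, 0, 0.005), (1, 2, 0.05), (3, 1, 0.5), (2, 2, 2.0)]
for pt in scan_points:
    d = plane_distance(pt, origin=(0, 0, 0), normal=(0, 0, 1))
    print(pt, round(d, 3), classify(d))
```

Loosening `good` and `bad` in this sketch has the same effect as loosening them in the dialog: fewer points land in the "bad" bucket, so only change them if the looser tolerance is acceptable for your part.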

This concludes the basics of reconstructing geometry using connected surfaces! If you have any questions or want more information, please post below and I’ll try to answer them as best I can!